
This week was the final rush to finish the game. I implemented a teleport system to connect the three levels. I spent a few hours implementing a pause menu and moving the debug control options into it. I also improved the look of the ‘BUSTED’ sign shown when the player is caught by a guard by placing it in world space, so that it is affected by the post-processing and appears to emit light. I added a tutorial section for the grapple introduction which tilts the camera up towards the grapple point. I also gave the camera a reset function, triggered by clicking the right stick on the controller. Finally, I spent a few hours polishing my scene and fixing bugs.

With the final vertical slice build now complete, I can say with certainty that I am proud of what this team has accomplished, and I am very happy with what I contributed to it. A few aspects went particularly well for me during this project. The first is level design. As Wellington Armageddon approached, we had essentially nothing to show because we had over-scoped our level. I worked with Conrad to quickly put together a level design, and I built it in-engine. It was far from perfect, but it was just what we needed to get the game into the hands of playtesters in Wellington. The feedback from those playtesters, and from the ones at MDS in the same week, proved invaluable to the development of our game mechanics. After Armageddon, as the Vertical Slice deadline drew closer, the team found itself in a similar state: we did not wish to submit the Armageddon level, as it was more of a gauntlet that threw all of its mechanics at the player at once. Zac and I picked up our whiteboard markers and designed another level which introduced mechanics more gradually and gave more context to its design. We quickly had a solid design which we did not deviate from much during development. The level was split into three scenes so that each programmer could work on one. We each put a lot of time into our scenes, and I am very happy with how they turned out (mine is the middle scene). Because we didn’t have much time, we used ProBuilder to create the level, which proved to be very efficient. The AI turned out alright. The guards have some expressive animations and provide at least a basic level of challenge and engaging gameplay. One of the things I would like to focus on after Vertical Slice is giving the player more ways to mess with the guards, because that’s always fun; right now they don’t do a whole lot. There were also a couple of features I was super happy with, purely because they looked so pretty (or at least I thought they did).
I especially love how the main menu turned out, as the endless running of the characters captures the game’s themes nicely. It’s also nice because it’s only about a dozen objects that keep getting pushed back and forth. The 3D text for the title turned out well because it was affected by the post-processing and therefore appeared to emit light. Also, when the lamp flew past the title text, it overlapped it, which looked really neat. Another aspect that I think looks quite slick is the ‘BUSTED’ text and cell bars which slide in when the player is caught by a guard. Finally, I was quite happy with how the grapple cable turned out. It’s still a bit janky, but it’s a big improvement over what it was.

The team and I also made a lot of mistakes and learnt a lot through the process. The biggest mistakes came from project management and my own stubbornness. Some aspects of the game were over-scoped, or cut when the game’s mechanics changed, resulting in a lot of hours that can’t be seen in the final product. A big one was using physics to control the player’s grapple arm and have it stretch towards the grapple point. Dylan spent a lot of time trying to get this to work, and we discussed opting for a simpler implementation. Unfortunately, my stubbornness did not let me give up just then, and I wasted a few more hours. After trying for a couple of days to get it working, I conceded that it was time to give up on the physics. A similar thing happened with the character controller. Early on, playtesters told us that the movement did not feel nice. Zac suggested we use a plugin designed to create fun movement in platformer games. That sounded good, but to me, using the plugin somehow felt like admitting defeat for being bad at programming. So I wasted another evening attempting to rewrite the movement, which of course amounted to nothing, and we used the plugin. I learnt two things here:
1) It’s easy to make movement in a game, but it’s difficult to make movement that feels good.
2) There is nothing wrong with using a plugin that will save you time.

The game’s scope and mechanics also changed significantly over time. We intended to have a larger level and built a significant amount of it. A light narrative was woven into the design of this level, so I developed a lightweight dialogue system to convey narrative context to the player. However, this level was unfortunately scrapped because it was over-scoped and there was simply not enough time to complete it and make the whole space interesting. The final Vertical Slice level had almost no narrative context, so my dialogue system was no longer necessary. It may come in handy later; we shall see. The continuous evolution of the game’s mechanics and levels was an interesting learning experience which, unfortunately, also involved a lot of hours that did not amount to anything. However, with the strong mechanical foundation we have created, I expect production going forward to be smoother and more efficient.


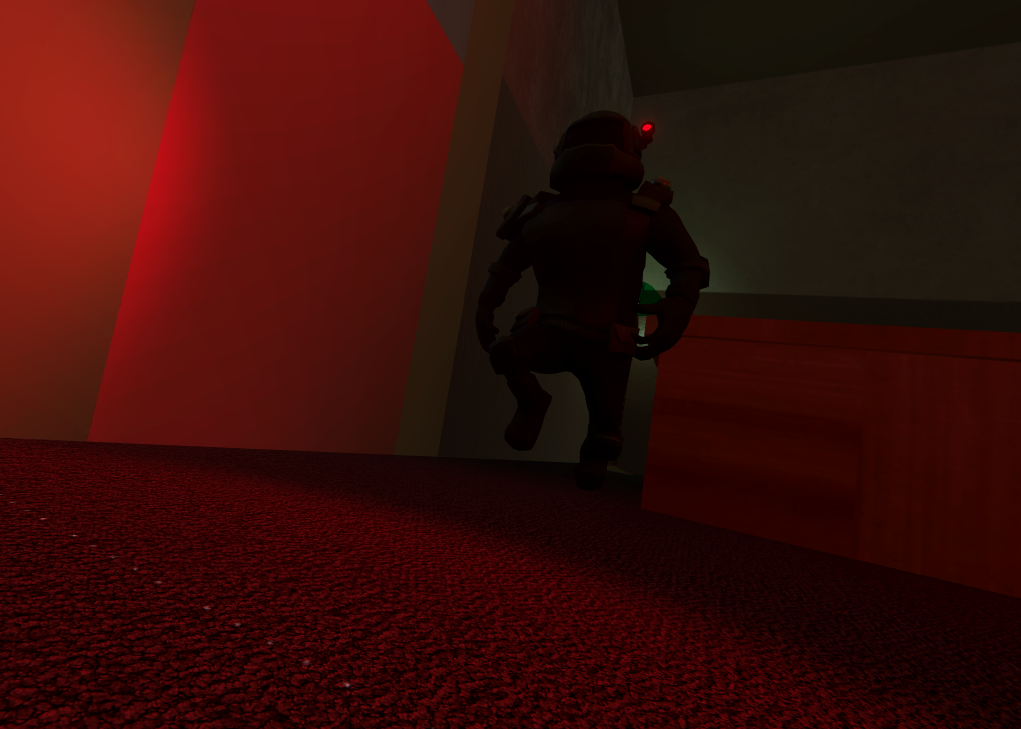

A lot of work went into the project this week, and something resembling a game happened to emerge. The team went from being incredibly stressed to quite content with the game’s current state. At the beginning of the week, we were fairly satisfied with the mechanics we had developed up to that point. However, we did not have a vertical slice level, and the Armageddon level had been designed as a mechanics playground, so we did not wish to use it. Zac and I spent an evening designing a new level, and I proposed that we build it with ProBuilder, as this would be the fastest option. We quickly familiarised ourselves with the tool and began working on the level the next day. We split the level into three scenes for a couple of reasons. First, the programmers could each work on a part of the level simultaneously. Second, with the vertical slice deadline approaching, we did not have enough time to optimise significantly, so we decided on smaller scenes with greater detail rather than one larger scene with less detail.

I created the middle scene of the level. The goal of my scene was to challenge the player with moving platforms, introduce a room with multiple guards including a lookout, and introduce the grappling mechanic. The moving platforms are positioned around a large stack of crates. On top of the crates is a trophy collectable (I will explain this later). The trophy is visible when the player enters the room, but it can only be reached by jumping across the moving platforms and climbing onto the stack of crates from the back, so it presents only a small challenge. After the moving platforms, the player is led through a corridor to the next room. This room is much darker and resembles a mysterious office after hours. Two guards patrol it: one does the rounds on the office floor and the other is perched on a balcony.
The guard on the balcony cannot chase the player if he is alerted to the player’s presence. Instead, his role is to alert his buddy on the ground using the AI’s shout system. The player must time their movement against the balcony guard’s facing to cross the room to the hallway on the far side unnoticed. The room features desks which let the player take advantage of the character’s small size, as the character can fit underneath them and evade detection. Under one of these desks is a trophy. A bright light draws the player to the hallway on the far side. Once they reach the light, the player must climb some crates and enter an air duct, from which they emerge in the next room. The final room of my scene introduces the grappling hook. As in the Armageddon level, the grappling hook is first used in an area where the player cannot die, as there is a floor underneath to stop them from falling. After this, the player must grapple across two grapple points with an endless drop below. This room also holds another trophy for more advanced players, who can swing to a balcony on the side and clamber across some pipes to retrieve it. After the two grapple jumps, the player lands on a platform and enters a door which leads into the third part of the level. I put a lot of hours into my scene and I’m very happy with how it turned out.

We decided to make the character run when a button is held, rather than requiring the player to push the stick gently for long stretches when stealth is required. This also more clearly separates the sneaking and running states: when you are running, it is now very obvious that you are making noise. As mentioned earlier, I added trophies to the game as loot which supplements the money earned from pickpocketing guards. I reused the trophy model from the prototype, tweaked the colour values, made it spin, added a particle effect and voila: a trophy collectable.
It also does a little dance when you pick it up. This week I made a couple of changes to the guards. First, I changed the load checkpoint function so that it always puts each guard back at its position at the start of the scene and resets its state. Previously, if a guard was right next to the player when the game was saved, the guard would keep killing the player after every load. Second, I made the guards’ flashlights change colour to reflect their state: an unaware guard has a yellow flashlight, and a guard that is chasing or searching for the player has a red one. Conrad made an emissive map for the guard which lets the flashlight bulb emit light as well, and I made sure the correct colour is passed to it too.

This week I also built the main menu for the game, which currently has only two options: start and exit. It features the main character endlessly running along a hallway while being chased by a guard. The characters aren’t actually moving; they are just stuck in the run animation. An overhead light, crates and the walkway beneath them all move to create the illusion that the characters are running. The overhead light and walkways are moved past the characters and have their positions pushed back once they reach a threshold, so the objects appear to move endlessly. I wanted the crates to have a little more variety, so I spawn them at runtime, move them along and destroy them once they’ve passed a threshold outside the camera’s view. I randomised the spawn interval, the crate layout and which side they spawn on. I am super happy with how this menu turned out, as it looks great and captures the theme of the game nicely.

Finally, I spent a few hours producing a more visually interesting death text. When the player is caught now, the gameplay freezes, the bloom slightly intensifies and a soft red and blue flashing light appears.
A cell door rolls in from the right of the screen and the word ‘BUSTED’ appears in front of it. At this point, the player must press ‘A’ or ‘SPACE’ to reload the previous checkpoint. I made the cell door in Illustrator and gave it the illusion of depth using gradients. Overall I’m quite happy with how this turned out; however, I think the text could look more interesting.
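
The main menu’s treadmill illusion can be sketched in a few lines. This is Python rather than the project’s Unity C#, so in practice the logic would live in a component’s per-frame update. It is a minimal sketch that assumes positions are tracked as a single distance along the hallway. The class and field names (`MenuTreadmill`, `loop_length`, `despawn_z` and so on) are hypothetical, and the crate layout randomisation is reduced to picking a side.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Crate:
    z: float   # distance along the hallway; scenery slides toward the camera (-z)
    side: int  # -1 = left wall, +1 = right wall


@dataclass
class MenuTreadmill:
    speed: float         # units per second the scenery slides toward the camera
    despawn_z: float     # threshold behind the camera, outside its view
    loop_length: float   # how far a recycled piece jumps back down the hallway
    spawn_z: float       # where new crates appear, far down the hallway
    min_interval: float  # shortest gap between crate spawns, in seconds
    max_interval: float  # longest gap between crate spawns, in seconds
    pieces: list = field(default_factory=list)  # z of recycled walkway/light pieces
    crates: list = field(default_factory=list)
    rng: random.Random = field(default_factory=random.Random)
    _timer: float = field(init=False, default=0.0)

    def __post_init__(self) -> None:
        self._timer = self.rng.uniform(self.min_interval, self.max_interval)

    def update(self, dt: float) -> None:
        step = self.speed * dt
        # Recycled pieces: slide them along, and push them back a whole loop
        # once they pass the threshold, so a fixed set of objects looks endless.
        for i, z in enumerate(self.pieces):
            z -= step
            while z < self.despawn_z:
                z += self.loop_length
            self.pieces[i] = z
        # Spawned crates: slide them along and drop any that have left the view.
        for crate in self.crates:
            crate.z -= step
        self.crates = [c for c in self.crates if c.z >= self.despawn_z]
        # A random spawn interval and a random side give the crates some variety.
        self._timer -= dt
        if self._timer <= 0.0:
            self.crates.append(Crate(self.spawn_z, self.rng.choice((-1, 1))))
            self._timer = self.rng.uniform(self.min_interval, self.max_interval)
```

Only the walkway and light pieces are recycled. The crates are created and destroyed, which trades a little allocation for more varied layouts.
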

Through the playtests at Media Design School and Wellington Armageddon, we accumulated a long list of valuable feedback. We received affirmation that the mechanics we had developed were fun, but we knew we had a long way to go in refining them to make them really feel good. The thing almost all playtesters found the most fun was the grapple hook, but even that needed a lot of work. For the programmers, this week was all about refining and iterating on the mechanics we already had.

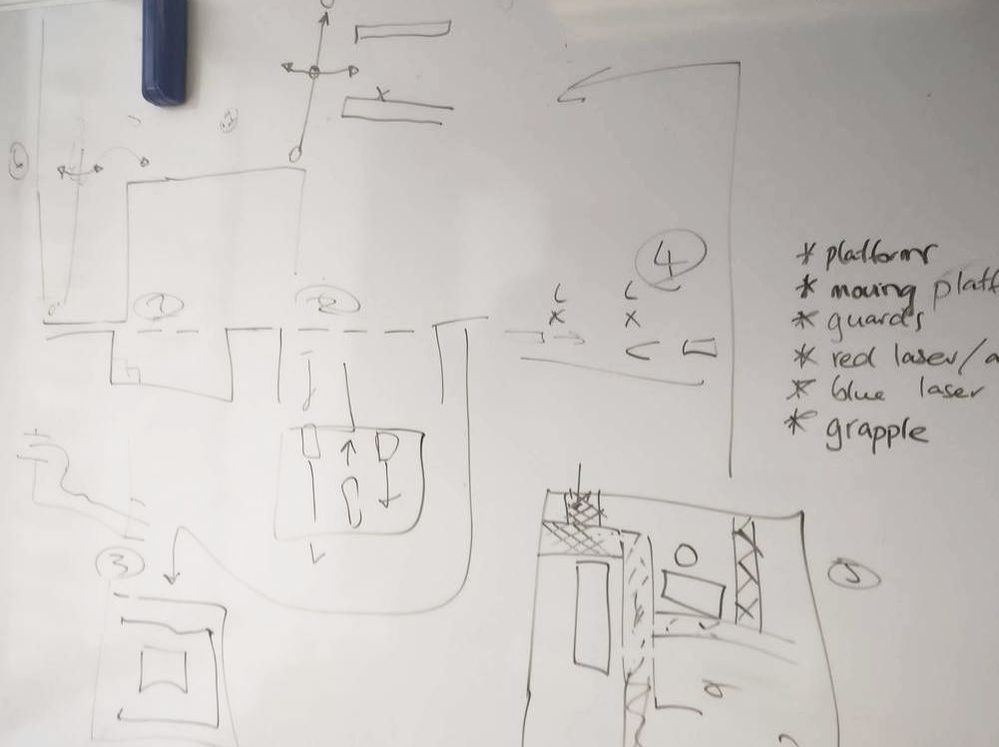

For me, the primary refinement concerns were again the camera, which many players felt was clunky, and the guards, who had the IQ of a bag of hammers and sometimes got stuck while searching for the player. I needed to implement a camera hint system so that when the player first encounters the grappling hook, for example, the camera gently tilts up to show it, since it is very difficult to make players look up. I had implemented something similar while making the dialogue system, which orientated the camera towards a particular target and zoomed in. I couldn’t simply reuse that dialogue camera, though, because it didn’t support automatically tilting the camera to face a higher or lower target; it could only swing the camera around horizontally. Additionally, the hint needed a weight value, so that rather than moving the camera to put the grapple point directly in the middle of the screen, it only ‘pushed’ the point towards the centre by that weight. It also needed to not zoom. The horizontal camera movement was easy to implement. The vertical movement was harder: initially the camera seemed to immediately face downwards when trying to focus on the grapple point. I eventually improved it, but it still doesn’t work in every situation, so I will have to revisit it next week. I will also need to keep refining the camera’s auto-rotation to make it smoother.

I reworked the AI this week to be a little more competent. The guards can now shout to nearby buddies for help while they are chasing the player. I also fixed a bug which made guards get stuck in the search state when their alert time reached 0 and they should have been heading back to their patrol route. The guards were getting stuck because the detection level never went all the way down to 0, which is the trigger for switching to the patrol state. I changed this check to compare the detection level against a small epsilon instead.
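
The post doesn’t say why the detection level stalled just above 0. One common cause in Unity, assumed here purely for illustration, is decaying the value with a lerp-style update, which removes only a fraction of what is left each frame. The Python sketch below uses hypothetical names and an assumed tolerance. It shows why an exact `== 0` check then never fires while an epsilon comparison does.

```python
DETECTION_EPSILON = 1e-3  # assumed tolerance; the project's actual value is not stated


def decay_detection(level: float, smoothing: float, dt: float) -> float:
    """Lerp-style decay toward 0, like Mathf.Lerp(level, 0, smoothing * dt).

    Each frame removes a fraction of what is left, so (for smoothing * dt < 1)
    the level approaches zero without reaching it in any realistic search time.
    """
    t = min(1.0, smoothing * dt)
    return level + (0.0 - level) * t


def should_patrol_exact(level: float) -> bool:
    # Buggy check: only fires if the level is exactly 0.0.
    return level == 0.0


def should_patrol(level: float) -> bool:
    # Fixed check: anything within epsilon of zero counts as zero.
    return level < DETECTION_EPSILON
```
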
I also improved our AI pathing tool so that we can choose whether a guard loops or ping-pongs along his path points. I also reworked the exclamation marks from the prototype to work with our guard AI. This makes it very easy to see what the guard is doing and how close he is to detecting the player. When a guard is standing in place while trying to find the player, it is now much easier to tell that he is searching, because we can see the detection meter ticking down. I also wrote some code to get the player’s delta rotation angle, for use in Juane’s turning animations for the player.

This week was a busy week of big decisions and lessons learnt. I spent the first day working in our prison level, creating events to be triggered during gameplay. These included making the guard walk the player to the exercise yard, and an intricate series of events which play out when the player first acquires the mechanical arm. The player picks the arm up off the table, which triggers glass walls to rise and alarms to sound, trapping the player. The player is taught how to use the grapple at this point and must use the newly acquired arm to swing up and out of the glass cage onto a catwalk. As the player leaves the room along this catwalk, a number of guards can be seen below, running towards the sound of the alarms in the room the player just left. This was a cool moment! But we had a problem. The Armageddon convention was just a few days away and we didn’t have a game. We had a giant level filled with crates, columns and platforms, but it didn’t support interesting gameplay and it was too large to finish to a high quality before vertical slice. After discussion with the team, we decided to focus on building a much more streamlined level that demonstrated all of the game’s mechanics, like a playground combined with a gauntlet.
This was the perfect way to let people quickly get a feel for the game’s mechanics (useful for playtests), and it was significantly more feasible to complete during vertical slice. Although Zac and Corne had spent a great many hours on the prison level, we knew it was the right decision to put it aside for now. I worked with Conrad to quickly map out a linear level which would demonstrate all the mechanics with what we thought would be a natural progression in difficulty. We drew it all out on a whiteboard before I began grey-boxing it in the engine. The basic layout took a number of hours to implement, but the benefits to the gameplay were already apparent. I combined my level with two elements that Dylan had added to his own level: a series of moving platforms and grapples, and a section which taught players about vents by blocking their progress with a laser wall. The outline for the level is as follows:

I added a grapple playground at the end for playtesting, to give players more time to swing between multiple connected grapple points while avoiding pursuing guards on various platforms. I made an electricity particle effect for when the player touches one of the blue lasers. I also experimented with what should happen to the player on contact. Initially, I thought freezing the player was a good idea, because if the player was shocked and stunned by a laser on a moving platform, that platform could move out from under them. However, this did not work well for walls of blue lasers, as the player could be stunned multiple times and become stuck for a while. I changed the laser to push the player away on contact instead.

This week I gave some sorely needed attention to the guards. I implemented their animations and made them react to sounds in the scene, such as the player’s movement or alarms going off. This needs some refinement, however, as the guards have too much trouble hearing alarms. I also spent a little time on the camera, making it much smoother. Another important lesson came from the time I spent on a new character controller. After receiving feedback that the current controller didn’t feel great, I spent an entire evening and part of the next morning building a new one. I ran another playtest with the controller I had just built, and the feedback was slightly more positive, but it still didn’t feel great. I knew that making it feel great would take a lot more time. We ended up buying a plugin, and we should have done this much earlier. Finally, I added a variety of debug information and commands for the Armageddon playtest, including an FPS display, camera X and Y inversion, camera sensitivity and camera auto-rotation. These values can be tweaked while playing. This was an important addition, as players all have different preferences for how the camera should control.
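
The switch from stunning to pushing can be sketched as a one-off knockback velocity. This is a hypothetical Python sketch, not the project’s code. It assumes y is up, and the `strength` and `lift` tuning values are invented. The resulting velocity would be handed to whatever movement component the project uses. Because the push moves the player out of contact straight away, a laser wall cannot keep re-triggering the effect the way repeated stuns could.

```python
import math


def laser_knockback(player_pos, contact_point, strength=8.0, lift=2.0):
    """Velocity (x, y, z) to give the player on touching a laser; y is up.

    `strength` and `lift` are hypothetical tuning values.
    """
    dx = player_pos[0] - contact_point[0]
    dz = player_pos[2] - contact_point[2]
    length = math.hypot(dx, dz)
    if length < 1e-6:
        # No horizontal direction to push along: just hop the player upward.
        return (0.0, lift, 0.0)
    # Push horizontally straight away from the contact point, plus a small hop.
    return (dx / length * strength, lift, dz / length * strength)
```
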
This was another extremely productive week for me. I built a dialogue system and an event manager, and improved many game features. My first task was hooking up Zac’s laser and alarm panel scripts to my alarm light script, so that the alarm lights are set off when the player collides with a laser. Once I had learnt how to properly light a scene in Unity, the alarm lights created a nice effect, with the red and blue lights bouncing off other surfaces. I added light probes and reflection probes to a small part of the scene to learn how they work. I created a metal material for the air vents with a reflective quality. It looks delicious and I love it very much.

The dialogue system was necessary to give the player information from NPCs in the game world, since voice acting was out of scope for this project. To give an NPC the ability to hold a conversation, we now only need to add a ‘Chatbox’ prefab as a child of the NPC and specify which text file to read the conversation from. The ‘Chatbox’ prefab has a trigger collider which initiates the conversation when the player enters it. The camera zooms into an over-the-shoulder angle looking at the NPC, and player movement is locked. The player then presses ‘A’ to progress through the dialogue sequence. The dialogue system supports multiple conversations with a single NPC, all stored in one text file. Each new conversation must be preceded by the tag ‘--BEGIN’ for the system to register it as a new conversation. The first prison guard the player meets has multiple conversations. When the player first meets this NPC, a conversation tells the player to follow the guard to the exercise yard. Another conversation is triggered on arrival at the exercise yard, instructing the player on what to do. The other tags used in the text file are ‘--PLAYER’ and ‘--NPC’, which determine who is saying the lines that follow.
This is used to show the name of the character currently speaking and to give the text a colour specific to that character. All of this is shown in-game in a box which only appears during dialogue. An example of a chat file:

    --BEGIN
    --NPC
    Okok steal the key. Easy as, guard is dumb.
    --Player
    ???
    --NPC
    So long

I knew there were going to be a lot of events triggered throughout the prison level, and I wanted a clean way of triggering them without unnecessarily cluttering existing scripts. To solve this, I created an event manager which stores functions in a dictionary keyed by strings and invokes them on request. A script can ask the event manager to start listening for a function, and that function can then be triggered from any other script, because the event manager is static. This avoids having to constantly find objects or get components. I incorporated this event system into the dialogue system so that an event can be triggered once a conversation is completed. The ‘Chatbox’ script simply takes a string, which is the key for the function in the event manager’s dictionary. For example, when the player finishes talking to the prison guard for the first time, an event is triggered which makes the prison guard walk the player to the exercise yard.
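
Here is a minimal Python sketch of the chat-file format and the string-keyed event manager, wired together the way the ‘Chatbox’ is described. It assumes each tag sits on its own line; the example file’s original line breaks were lost, so that layout is a guess. `parse_conversations`, `EventManager`, `Chatbox.play` and any event keys are hypothetical names. The real system is a set of Unity C# scripts.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Optional, Tuple

Line = Tuple[str, str]  # (speaker tag, text)


def parse_conversations(text: str) -> List[List[Line]]:
    """Split a chat file into conversations of (speaker, text) pairs.

    Assumes each tag sits on its own line: --BEGIN starts a conversation, and
    --NPC / --PLAYER (any case) set the speaker for the lines that follow.
    """
    conversations: List[List[Line]] = []
    speaker: Optional[str] = None
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        tag = line.upper()
        if tag == "--BEGIN":
            conversations.append([])
            speaker = None
        elif tag in ("--NPC", "--PLAYER"):
            speaker = tag[2:]
        elif conversations and speaker is not None:
            conversations[-1].append((speaker, line))
    return conversations


class EventManager:
    """Static registry of callbacks keyed by string, reachable from any script."""
    _listeners: Dict[str, List[Callable[[], None]]] = defaultdict(list)

    @classmethod
    def start_listening(cls, key: str, callback: Callable[[], None]) -> None:
        cls._listeners[key].append(callback)

    @classmethod
    def stop_listening(cls, key: str, callback: Callable[[], None]) -> None:
        if callback in cls._listeners.get(key, []):
            cls._listeners[key].remove(callback)

    @classmethod
    def trigger(cls, key: str) -> None:
        # Iterate over a copy so a callback may safely stop listening while running.
        for callback in list(cls._listeners.get(key, [])):
            callback()


class Chatbox:
    """Plays one conversation, then fires an optional event by its string key."""

    def __init__(self, conversations: List[List[Line]], on_complete: Optional[str] = None):
        self.conversations = conversations
        self.on_complete = on_complete

    def play(self, index: int, show: Callable[[str, str], None]) -> None:
        for speaker, text in self.conversations[index]:
            show(speaker, text)  # in-game this would wait for 'A' before the next line
        if self.on_complete:
            EventManager.trigger(self.on_complete)
```

Keeping the callbacks behind string keys means the ‘Chatbox’ never needs a reference to the guard’s script. The trade-off is that a mistyped key fails silently rather than at compile time.
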

For many situations, a more generic way of triggering events would be helpful, so I created a script which invokes an event when something enters a trigger collider. With this script, I can specify the string for the event I wish to invoke and the tag of the object that should enter the collider. For example, there is an instance of this inside the prison exercise yard. It checks for the ‘Guard’ tag, and when the prison guard enters the collider, a function is invoked which readies the NPC for the second conversation. This function lives in a script on the prison guard. This turned out to be a good way to set up events: although the level layout is still likely to change, all I need to do is move the colliders to keep everything working.

I made further improvements to the camera this week. I mapped a zoom to the up button, which uses the same zoom function as the dialogue system. However, the biggest change was making the auto-rotation much less aggressive and the movement smoother. The camera no longer always tries to move behind the player. Instead, once the player has not touched the right analogue stick for a couple of seconds, the camera slowly moves back behind the player. I also changed the free walk zone so that the player no longer flickers between the inside and outside of the zone, which had made the camera movement janky.

I reworked and cleaned up the inventory system, which hadn’t been touched since the prototype. It stores items in a list and now provides static functions for adding items and checking whether an item is in the inventory. This is used by the door and keycard system. I extended Zac’s button script to accept a required object: if a door requires a keycard to be opened, that keycard can be assigned to the button’s required object field, and the button will only activate the door once the player has it.
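
The static inventory and the required-object check on the button fit together as shown below. This is a hypothetical Python sketch with invented class names. Items are represented as plain strings for simplicity; the Unity version would more likely reference GameObjects or item assets.

```python
from typing import List, Optional


class Inventory:
    """Static inventory: one shared list of item names (string items are an assumption)."""
    _items: List[str] = []  # class-level on purpose, so every script sees the same list

    @classmethod
    def add_item(cls, item: str) -> None:
        cls._items.append(item)

    @classmethod
    def has_item(cls, item: str) -> bool:
        return item in cls._items


class Door:
    def __init__(self) -> None:
        self.is_open = False

    def open(self) -> None:
        self.is_open = True


class Button:
    """Activates a door, optionally only if a required item is in the inventory."""

    def __init__(self, door: Door, required_item: Optional[str] = None) -> None:
        self.door = door
        self.required_item = required_item

    def press(self) -> bool:
        if self.required_item is not None and not Inventory.has_item(self.required_item):
            return False  # e.g. the keycard hasn't been picked up yet
        self.door.open()
        return True
```
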
Lastly, throughout the week I completed lots of little tasks. I cleaned out lots of legacy code and assets. I made the list of controls accessible from anywhere, so we don’t need to keep going through the input manager. I made the stealth AI slightly less silly and fixed some bugs in how the stealth interacted with the sound detection system. I also worked on the GDD throughout the week.

This was an extremely productive week for me. I made strong progress in many areas of the game, including the AI, camera, grapple mechanic, alarms and player movement. I built on the camera auto-rotation mechanic I had been working on in week 2, which uses ray casts extending from the player to anticipate the player being occluded and swing the camera to avoid it. The rays had been directed relative to the player’s back vector; I fixed this by making them relative to the camera’s back vector instead. The rays should be relative to the camera because the camera is the object that needs to swing around, not the player. I also implemented a weight system to supplement this, which gives greater influence to rays closer to the edges of the set. With this weight system, the camera reacts better to sharp turns. Additionally, if the player walks between two pillars, the weights on either side cancel out, so the camera stays behind the player. To further improve the camera system, I implemented a free walk zone: a small zone in which the player can move freely without the camera following. This makes the camera appear less jerky by ignoring small movements. After some trial and error, I also developed another auto-rotation mechanic which aligns the camera behind the player when the player is not touching the right analogue stick. The purpose of these automatic camera movements is to make the game playable without having to rotate the camera manually; manual control should only be necessary when the player wants more precise control.
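
The weighted ray swing and the free walk zone can be sketched as two small functions. This is a minimal Python sketch under stated assumptions, not the project’s implementation. The ray hits are taken as a precomputed list ordered from left to right around the camera’s back vector. Each ray’s weight is assumed to equal its normalised distance from the centre. `max_swing_deg`, the sign convention and the drag-to-edge behaviour of the zone are all hypothetical choices.

```python
import math
from typing import Sequence, Tuple


def swing_from_rays(hits: Sequence[bool], max_swing_deg: float = 45.0) -> float:
    """Yaw adjustment in degrees from a fan of occlusion rays.

    hits[i] is True if ray i hit geometry. Rays are ordered from the left edge
    (index 0) to the right edge and spread evenly around the camera's back
    vector. A positive result swings away from obstacles on the left, a
    negative one away from obstacles on the right.
    """
    n = len(hits)
    if n < 2:
        return 0.0
    centre = (n - 1) / 2
    offsets = [(i - centre) / centre for i in range(n)]  # -1 (left edge) .. +1 (right edge)
    # Each hit pushes away from its own side with strength |offset|, so edge rays
    # weigh more than inner ones, and matching hits on both sides (two pillars) cancel.
    total = -sum(o for o, hit in zip(offsets, hits) if hit)
    max_one_side = sum(abs(o) for o in offsets) / 2
    return max(-1.0, min(1.0, total / max_one_side)) * max_swing_deg


def free_walk_follow(focus: Tuple[float, float], player: Tuple[float, float],
                     radius: float) -> Tuple[float, float]:
    """Camera focus (x, z) that only moves once the player leaves a circle of `radius`."""
    dx, dz = player[0] - focus[0], player[1] - focus[1]
    dist = math.hypot(dx, dz)
    if dist <= radius:
        return focus  # small movements inside the zone are ignored
    # Drag the focus just far enough that the player sits on the zone's edge.
    scale = (dist - radius) / dist
    return (focus[0] + dx * scale, focus[1] + dz * scale)
```

With this weighting, a hit on a ray pointing straight back contributes nothing. Only rays off to one side steer the camera.
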
Without these automatic camera movements, navigating narrow corridors or grappling across chasms could become quite difficult, as players would constantly have to manipulate both the player character and the camera. I still need to do a lot more work in this area to make the camera smoother and more predictable.

The AI were given a more effective way of searching for the player after losing sight of them. Previously, it was easy to evade a pursuing guard by dashing around a corner. Now, guards go to the player’s last known location and sweep left and right to search for the player, making them much more effective. I also worked with Zac on a more robust visual detection function, which takes the transforms of the player character’s head, hands, feet and so on. The function determines what percentage of these points the guard can see and uses this percentage to scale how fast the detection meter fills. Zac did most of the coding for this, but I integrated it with the other parts of the plugin. For the security system, I thought it would be neat for the alarms in the level to match the flashing red and blue lights that Conrad’s guard will use when chasing the player, so I quickly threw together an alarm light which swirls around and can be triggered from a script. For a stealth game, some might argue that it is beneficial for the player character to be able to sneak. Therefore, I implemented crouch functionality. But first I had to clean up the player movement script, which had become messy and bloated from poor coding standards and legacy code. The crouch scales down the player’s capsule collider and, since we don’t have the appropriate animation yet, currently also scales down the player model to simulate crouching. If the player jumps, the crouch is immediately cancelled, and crouching cannot be activated while grappling.
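
Here is a hypothetical Python sketch of the visual detection idea: count how many of the player’s sample points the guard can see, then scale the meter’s fill rate by that fraction. The function names and `base_rate` are invented. The line-of-sight test is passed in as a callable. In Unity it would typically be a `Physics.Linecast` between the guard’s eye and each point, run after any view-cone and range checks, which are not shown here.

```python
from typing import Callable, Sequence, Tuple

Point = Tuple[float, float, float]


def visible_fraction(eye: Point, body_points: Sequence[Point],
                     can_see: Callable[[Point, Point], bool]) -> float:
    """Fraction of the player's sample points (head, hands, feet, ...) the guard can see.

    `can_see` stands in for a line-of-sight test between the two points.
    """
    if not body_points:
        return 0.0
    seen = sum(1 for p in body_points if can_see(eye, p))
    return seen / len(body_points)


def fill_detection(meter: float, fraction: float, base_rate: float, dt: float,
                   max_meter: float = 1.0) -> float:
    """Fill the detection meter faster the more of the player is visible."""
    return min(max_meter, meter + base_rate * fraction * dt)
```

A player half-hidden behind a desk is therefore still detected, just more slowly than one standing in the open.
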

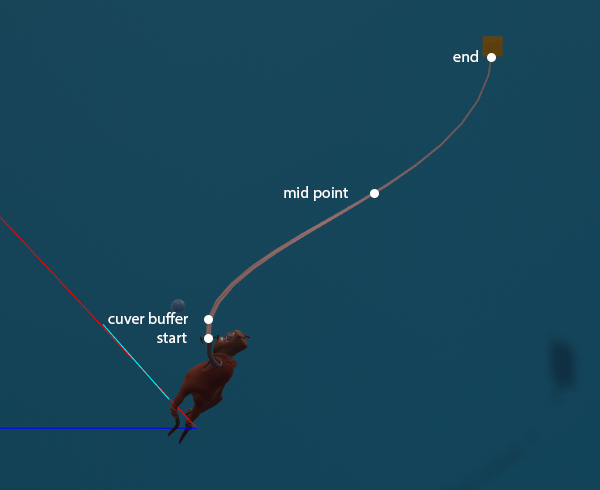

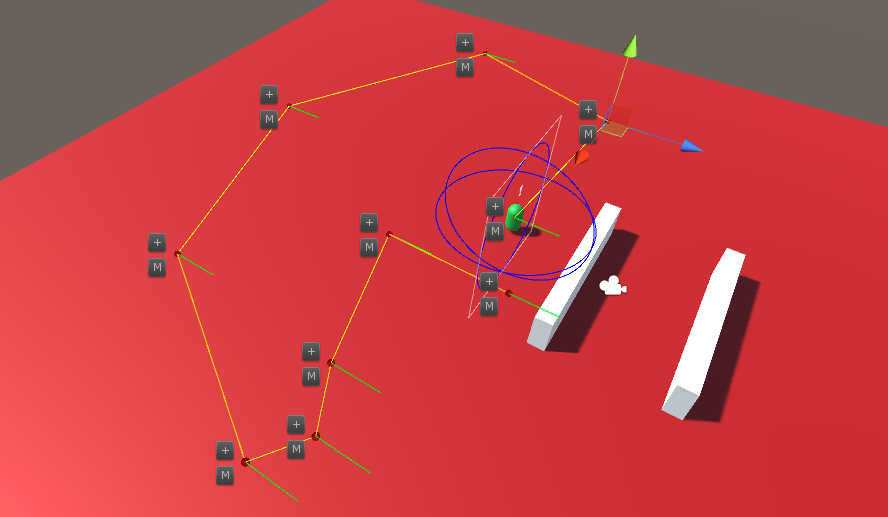

Finally, I spent a significant amount of time working on the grappling system. Too much time. Initially, we wanted the robot arm to be physics-based and move towards the grapple points. This worked mechanically but unfortunately looked incredibly janky. After experimenting with joints, I tried inverse kinematics on the arm (very difficult on a non-humanoid rig with 20 bones in the arm) and even tried steering to drive the arm bones towards a target. In some attempts we managed to get the positions of the bones correct but not their rotations, which made the arm look flat from some directions. Eventually it was time to call it quits on the dynamic arm; it was simply eating too much time. We opted for a traditional animation approach instead: the arm moves up towards the character’s head and a cable shoots out from the hand to the grapple point. I created a cylinder object that could scale, rotate and position itself between two points, and set up texture scaling on it so that the texture tiled correctly as the cable stretched out. I moved the anchor point of the joint between the grapple point and the player to the player’s hand. Scott gave me animations to test for walking, idle, jumping and grappling, and grappling looked great with the new animation. However, I knew it could still be better. I ditched the cylinder in favour of a line renderer. Initially it looked almost identical, but the line renderer gave me more control, such as adjusting the width curve of the line. I thought about how the line renderer could give the cable a curved look, as if it were bending relative to the player character’s velocity. I added a third point with a height between the start and end points, whose x and z were lerped towards the grapple point to simulate the bend. I then passed these points through a smoothing function which adds additional points to make the curve smoother.
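
The bend-and-smooth approach for the cable can be sketched in Python as follows. The post doesn’t name its smoothing function, so Chaikin corner cutting is used here as a stand-in. It is also an assumption that the middle point sits at the average height of the two ends. `cable_control_points` and `bend` are hypothetical names. In Unity, the resulting list would be copied into the line renderer with `LineRenderer.positionCount` and `SetPositions`.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def lerp(a: float, b: float, t: float) -> float:
    return a + (b - a) * t


def cable_control_points(hand: Vec3, anchor: Vec3, bend: float) -> List[Vec3]:
    """Hand, a bent middle point, and the grapple anchor.

    The middle point sits at the average height of the two ends; its x and z
    are lerped from the hand toward the anchor by `bend` (0..1), which could be
    driven by the player's velocity.
    """
    mid = (lerp(hand[0], anchor[0], bend),
           (hand[1] + anchor[1]) / 2,
           lerp(hand[2], anchor[2], bend))
    return [hand, mid, anchor]


def chaikin(points: List[Vec3], iterations: int = 3) -> List[Vec3]:
    """Chaikin corner cutting: each pass replaces every segment with its 1/4 and
    3/4 points while keeping the two end points, so the curve stays attached to
    the hand and the anchor."""
    for _ in range(iterations):
        if len(points) < 3:
            break
        smoothed = [points[0]]
        for p, q in zip(points, points[1:]):
            smoothed.append(tuple(0.75 * a + 0.25 * b for a, b in zip(p, q)))
            smoothed.append(tuple(0.25 * a + 0.75 * b for a, b in zip(p, q)))
        smoothed.append(points[-1])
        points = smoothed
    return points
```

Each pass doubles the point count (3, 6, 12, 24), so a couple of iterations is usually enough for a cable of this length.
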
After testing the mechanic, I improved it further by adding one more point just after the start point, so that the cable first comes straight out of the hand before it starts curving towards its target. This prevents clipping with the claw and creates an effect I am very satisfied with.

A ray cast has hit a wall before the player character has been occluded, giving the camera an opportunity to swing away from the wall.

During week 1, we developed a clear outline of what we aimed to achieve for the vertical slice. During week 2, the discussions about the game’s direction wound down and it was full steam ahead for production. My two priorities this week were the AI pathing tool and improvements to the game’s camera. The prototype only had players traversing the environment on a 2D plane. With the introduction of platforming elements and increased player mobility, the camera needed to be updated to accommodate this. I added vertical movement to the camera to give players more control. I also improved the smoothness of the camera’s movement by making the camera a separate object rather than a child of the player character, as it previously was. This let the camera follow the player character while ignoring the small movements the player makes when steering the character, which could otherwise make the camera movement jerky. I also changed the camera’s follow behaviour so that it only follows the player character when they are grounded or falling, because having the camera follow the character upwards every time they jump is distracting. Of course, it was also important to make sure the camera didn’t clip through walls and objects.
A clipping prevention check was implemented in the prototype, but it is now more robust because it uses a sphere cast instead of a ray cast to check for occlusion. I also attempted to implement a camera swing mechanic to make the camera smoother. Camera swing is used in third-person games such as Journey and Ratchet & Clank and kicks in when the player is about to turn a corner and become occluded from the camera. Rather than pushing the camera directly in front of the wall, which can be jarring if done too quickly, the camera anticipates the occlusion and swings around to keep the player in view. This is commonly implemented with a series of evenly spaced ray casts directed from behind the player. When one of the rays detects an object, the camera swings to counteract it. The difficulty came in defining the rules that determine when the camera should swing. In some situations, it does not make sense for the camera to swing even when a ray hits an object, e.g. if the player is facing away from the wall. This feature is approximately halfway complete and I will continue developing it in week 3.

During the first week, I had learnt enough about Unity's Editor API to build a simple and intuitive tool for mapping the AI's patrol paths. The system creates a series of empty objects, each holding a script that defines the AI's behaviour at that point of the path. The tool lets us set the position of each point, how long the AI agent should wait there, and which direction the agent should face while waiting. I added a button to the inspector of the path point script which creates a new point after the selected one and automatically selects it. I made this process even easier by drawing a GUI button with a '+' symbol next to each point in the scene view, which adds a new point at that location when clicked.
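The camera swing rules described in the camera section above could be expressed as a small decision function. This Python sketch leaves the ray casting itself to the engine. It assumes the caller passes one hit flag per ray, ordered left to right behind the player, plus the surface normal of the wall that was hit. The left-to-right weighting, the `max_rate_deg` value and the "facing away from the wall" dot-product test are illustrative assumptions, not the finished rule set.

```python
from typing import List, Optional, Tuple

Vec2 = Tuple[float, float]  # (x, z) on the ground plane


def lateral_weights(ray_count: int) -> List[float]:
    """Evenly spaced weights from -1 (leftmost ray) to +1 (rightmost ray)."""
    if ray_count == 1:
        return [0.0]
    return [-1.0 + 2.0 * i / (ray_count - 1) for i in range(ray_count)]


def swing_rate(hits: List[bool], player_forward: Vec2, wall_normal: Optional[Vec2],
               max_rate_deg: float = 90.0) -> float:
    """Yaw rate in degrees per second (positive = swing right, an assumed
    convention). Hits on the left push the camera right and vice versa.
    Returns 0 when nothing is hit or the player faces away from the wall."""
    if not any(hits) or wall_normal is None:
        return 0.0
    # Example rule: the wall normal points out of the wall, so a positive dot
    # product means the player is heading away from it and no swing is needed.
    if player_forward[0] * wall_normal[0] + player_forward[1] * wall_normal[1] > 0.0:
        return 0.0
    weights = lateral_weights(len(hits))
    push = -sum(w for w, hit in zip(weights, hits) if hit) / len(hits)
    return max_rate_deg * push
```

With five rays, a hit on only the leftmost ray gives a rightward swing of 18 degrees per second, and a hit on only the centre ray gives no swing. Further rules can be added as extra early returns.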
This simple addition makes the pathing tool more efficient for the team because we don't need to constantly click back and forth between the scene view and the inspector. I ran into an issue while developing this tool due to how gizmos work in Unity. I use gizmos to show the position of path points (which are empty objects) and the connections between them. Older versions of Unity let users click on a gizmo in the editor to select the object that drew it. Unfortunately, gizmos no longer work this way, and after much trial and error I gave up on making them clickable. Instead, I added another GUI button in the scene view, like the '+' button, which lets us easily select and move any point. I updated the AI controller script to use the points created in the path editor. The AI agents follow the points, and if a point has a wait time, the agent waits there and rotates to face the specified direction (represented by the green lines). The AI's states were also fleshed out to better handle reacting to the player. When an AI agent sees the player while patrolling, it begins chasing them. If the agent loses sight of the player, it travels to the player's last known location and searches from there. The last known location is stored as static data, so every AI agent can access it, as if they were talking to each other. The alert time and search radius can be set outside of the script. The agent keeps generating random points on the NavMesh within the search radius in front of the player's last known location and travelling to them until either the alert time expires or the player is found. If the alert time expires, the detection level resets and the agent returns to its path.
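The search behaviour can be sketched in Python as follows. A class attribute plays the role of the static last known location. Each search point is drawn from a sector of the search radius centred on an assumed `last_known_heading` (the direction the player was moving). The sector half-angle and the fixed `seconds_per_point` budget are hypothetical simplifications. In Unity, each candidate would also be projected onto the NavMesh, for example with `NavMesh.SamplePosition`, before being used as a destination.

```python
import math
import random
from typing import Iterator, Optional, Tuple

Vec2 = Tuple[float, float]  # (x, z) on the ground plane


class AlertState:
    """Shared by every guard, mirroring a static field in C#."""
    last_known_location: Optional[Vec2] = None
    last_known_heading: Vec2 = (0.0, 1.0)  # assumed: direction the player was moving


def random_search_point(origin: Vec2, heading: Vec2, radius: float,
                        rng: random.Random, half_angle_deg: float = 90.0) -> Vec2:
    """Random point within `radius` of origin, inside a sector centred on heading."""
    base = math.atan2(heading[1], heading[0])
    angle = base + math.radians(rng.uniform(-half_angle_deg, half_angle_deg))
    distance = radius * math.sqrt(rng.random())  # sqrt keeps the density uniform by area
    return (origin[0] + distance * math.cos(angle),
            origin[1] + distance * math.sin(angle))


def search_points(alert_time: float, seconds_per_point: float, radius: float,
                  rng: random.Random) -> Iterator[Vec2]:
    """Yield search destinations until the alert time runs out.

    seconds_per_point is a hypothetical stand-in for the travel and look-around
    time at each point; the caller stops iterating early if the player is seen.
    """
    origin = AlertState.last_known_location
    if origin is None:
        return
    elapsed = 0.0
    while elapsed < alert_time:
        yield random_search_point(origin, AlertState.last_known_heading, radius, rng)
        elapsed += seconds_per_point
```

Using a generator means the guard's controller can pull one destination at a time and simply stop asking once the player is spotted, at which point it switches back to chasing.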

The speed of the AI agent varies by state. While patrolling, agents walk at a regular speed. While chasing the player, they speed up, and while searching, they slow down, as if searching very carefully. I will make these values editable outside of the script so we can tune them during testing. Finally, I also helped other members of the team with a few problems. For example, I improved the input for running along ropes in Dylan's script. The analogue stick input is converted into a direction relative to the camera's forward vector, and that direction is compared with the direction of the rope. This means the player can traverse the rope by pushing the analogue stick in the rope's direction, regardless of which way the camera is facing. There is a 30-degree leeway, so players don't need to be perfectly accurate. I also helped Zac set up an editor script for his laser system, which will make our jobs easier later on.

During the first week of the vertical slice, a significant amount of time was spent discussing the direction of the game. There was no shortage of ideas being tossed around, but we eventually nailed down the core mechanics that we would develop. A light narrative around the character's origins was also established to give context to the gameplay and level designs. The team decided to shift the focus more towards the stealth aspect of the game, rather than the concept of letting players steal everything in sight.
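The rope-running input for Dylan's script, described above, can be sketched like this in Python, with directions on the ground plane given as (x, z). It assumes Unity's left-handed, Y-up axes, so camera right is camera forward rotated 90° clockwise when seen from above. It also assumes that pushing against the rope direction should move the player back along it. Only the 30-degree leeway comes from the text.

```python
import math
from typing import Tuple

Vec2 = Tuple[float, float]  # (x, z) on the ground plane


def normalize(v: Vec2) -> Vec2:
    length = math.hypot(v[0], v[1])
    return (v[0] / length, v[1] / length) if length > 1e-6 else (0.0, 0.0)


def stick_to_world(stick: Vec2, camera_forward: Vec2) -> Vec2:
    """Rotate stick input (x = right, y = up) into the camera's frame."""
    fwd = normalize(camera_forward)
    right = (fwd[1], -fwd[0])  # camera right in a left-handed, Y-up frame like Unity's
    return (right[0] * stick[0] + fwd[0] * stick[1],
            right[1] * stick[0] + fwd[1] * stick[1])


def rope_direction(stick: Vec2, camera_forward: Vec2, rope_dir: Vec2,
                   leeway_deg: float = 30.0) -> int:
    """+1 to move along rope_dir, -1 to move back along it, 0 otherwise."""
    move = normalize(stick_to_world(stick, camera_forward))
    rope = normalize(rope_dir)
    if move == (0.0, 0.0) or rope == (0.0, 0.0):
        return 0
    cos_angle = move[0] * rope[0] + move[1] * rope[1]
    threshold = math.cos(math.radians(leeway_deg))
    if cos_angle >= threshold:
        return 1
    if cos_angle <= -threshold:
        return -1
    return 0
```

Comparing cosines instead of angles avoids an `acos` call: an input within 30° of the rope has a cosine of at least cos(30°), roughly 0.866.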

Due to the focus on stealth, I began working on the scripts that handle the AI of the NPCs. I created a DLL plugin containing scripts that can be attached to any object. These scripts give the object an AI controller so that it can move around and react to the player. So far, only a simple visual detection mechanic has been implemented. It works by calculating the direction vector from the AI agent to the player. This direction vector is compared to the agent's forward vector, and if the angle between the two vectors is within the agent's field of view, a second check is carried out: a ray cast from the agent to the player character to determine whether an object is occluding it. These simple checks will need further development to allow for more robust visual detection. Currently, the ray cast only targets the centre point of the player character. This means that almost half of the player character's model could be unobstructed by a wall, yet it would still be invisible to the AI agent because its centre point is behind the wall. The check needs to be developed to estimate what percentage of the player model is visible, which will then affect how fast the detection level increases. Our team can edit the NPC's visual and auditory perception values, as well as its field of view, in the inspector. Another script in the DLL lets us automatically create detection meters on the object that communicate with the AI controller script. The detection meter can be edited in the inspector without having to access its canvas. The meter fills up to show how aware an NPC is of the player, and when it is full, the NPC chases the player. The detection meter script is set up so that the team can easily swap out its sprites.
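The two-stage visual check, plus the proposed partial-visibility measure, might look like this in Python. `fov_deg` is assumed to be the full cone angle, so the test compares against half of it. `is_blocked` is a hypothetical callback standing in for the engine's ray cast. Casting to several sample points on the player model approximates the percentage of the model that is visible.

```python
import math
from typing import Callable, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def in_field_of_view(agent_pos: Vec3, agent_forward: Vec3, target: Vec3, fov_deg: float) -> bool:
    """First check: is the angle between the agent's forward vector and the
    direction to the target within half of the (assumed full-cone) FOV?"""
    to_target = _sub(target, agent_pos)
    dist = math.sqrt(_dot(to_target, to_target))
    if dist == 0.0:
        return True  # target is at the agent's own position
    fwd_len = math.sqrt(_dot(agent_forward, agent_forward))
    cos_angle = _dot(to_target, agent_forward) / (dist * fwd_len)
    return cos_angle >= math.cos(math.radians(fov_deg / 2.0))


def visible_fraction(agent_eye: Vec3, sample_points: Sequence[Vec3],
                     is_blocked: Callable[[Vec3, Vec3], bool]) -> float:
    """Second check, extended: cast to several points on the player model
    instead of only its centre and return the fraction that are not occluded.
    is_blocked is a hypothetical stand-in for an engine ray cast."""
    if not sample_points:
        return 0.0
    clear = sum(1 for p in sample_points if not is_blocked(agent_eye, p))
    return clear / len(sample_points)
```

The detection level could then rise at a rate scaled by this fraction, e.g. a hypothetical `base_rate * visible_fraction(...)`, so a half-hidden player is noticed more slowly than a fully exposed one.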
However, an NPC is not required to have a detection meter; it is merely a representation of the data held in the AI controller script. I've also begun work on the path editor. I experimented with scriptable objects, custom editor windows and tree view windows, but it was difficult to create an implementation that was intuitive and easy to use. During the second week, I will focus on making the tool simpler.

Author: Contrary Scholars
